This page walks you through the complete process of setting up a testbed for wireless network emulation.

The process is split into discrete steps, some of which build on previous ones.

Quicklinks to all steps:

- Step 1: Familiarize yourself with our physical setup

- Step 2: Perform a basic setup of the gateway server and nodes

- Step 3: Set up the gateway server

- Step 4: Set up the nodes

- Step 5: Set up time synchronization between the gateway and nodes

- Step 6: Read up on the technical background

- Step 7: Have a look at a simple scenario file

- Step 8: Perform a simple emulation

Step 1: Familiarize yourself with our physical setup

The wireless network emulation functionality is an extension of our previous emulation facilities, sharing the physical setup and basic mechanisms. We encourage you to have a look at the architecture, our hardware and instructions on how to set up the basic system to gain a deeper understanding of the base system. Nonetheless, we provide the most important details below.

Our testbed consists of 20 Banana Pi Routers (BPI-R1, also called Lamobo R1) which are in two subnets at the same time. One subnet is for emulation purposes, the other one is for management and logging. The subnets are organized as a star topology, which can be modified (restricted) to resemble an arbitrary network topology consisting of links with different characteristics like bandwidth, delay and loss (using iptables, tc-tbf and netem). A dedicated gateway server manages the emulation process, collects the logs and serves as a gateway for the BananaPi Routers (which are from now on called nodes).

All nodes have a user called nfd.

The management network (gateway to nodes) uses the subnet 192.168.0.0/24, with 192.168.0.10 being the first node’s IP. The emulation network (node to other nodes) uses the subnet 192.168.1.0/24.
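The addressing scheme above can be sketched in a few lines of Python. This is only an illustration: the helper `node_addresses` is hypothetical and not part of the emulation_lib, and it assumes that a node uses the same last octet in both subnets. The constant names mirror those of the example config file shown in the last step.

```python
from typing import Tuple

# Values taken from the addressing scheme described above.
MNG_PREFIX = "192.168.0."   # management subnet
EMU_PREFIX = "192.168.1."   # emulation subnet
HOST_IP_START = 10          # last octet of the first node's IP


def node_addresses(node_id: int) -> Tuple[str, str]:
    """Return (management IP, emulation IP) for a zero-based node id.

    Hypothetical helper; assumes the same last octet in both subnets.
    """
    last_octet = HOST_IP_START + node_id
    if not 0 < last_octet < 255:
        raise ValueError(f"node id {node_id} does not fit into a /24 subnet")
    return MNG_PREFIX + str(last_octet), EMU_PREFIX + str(last_octet)


print(node_addresses(0))   # first node
print(node_addresses(19))  # twentieth node
```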

Step 2: Perform a basic setup of the gateway server and nodes

- Set up a Linux distribution on a machine to serve as gateway server.

- Set up a Linux distribution on at least one node and connect the node to the gateway server.

- Test if you can log in to the node from the gateway using SSH.

Although we use BananaPi-R1s, the emulation framework is not bound to a specific set of hardware. The nodes should be able to be in two networks at the same time (e.g. have two physical network interfaces) in order to be able to use the framework without modifications.

Linux is required because the emulation process relies on Linux tools.

If your nodes are BananaPi-R1s, you can use the system images we provide in our download section. Our download section additionally provides links to GitHub repositories containing the newest version of the emulation framework (+ example scenarios) as well as an artifacts bundle with the code/results used in the work on the conference paper.

We recommend setting up the gateway and two nodes using this step-by-step guide, performing a test emulation and, once satisfied, creating a system image of one of the nodes for replication across all other nodes. This saves time and serves as a backup while ensuring a homogeneous working environment on all nodes.

Step 3: Set up the gateway server

The gateway server is running Ubuntu 16.04 LTS. All listings are tested to work with this OS/version, but may well work with other or newer distributions.

To ease and automate the installation process, we will be using the lightweight open source configuration management tool Ansible.

# Install ansible using a ppa (Ubuntu 16.04)
sudo apt-add-repository ppa:ansible/ansible
sudo apt-get update
sudo apt-get install ansible
ansible --version

We additionally recommend creating and deploying an SSH key to make accessing the nodes from the gateway server easier.

ssh-keygen # if a key does not already exist
ssh-copy-id nfd@192.168.0.10
ssh-copy-id nfd@192.168.0.11
...

Next, install git and clone a repository containing the Ansible setup scripts.

We choose ~/emulation to be the parent directory for all emulation-related files.

sudo apt install git
mkdir ~/emulation
cd ~/emulation
git clone https://github.com/theuerse/emulation_lib-example-scenarios.git
cd emulation_lib-example-scenarios/ansible_host_setup/platform/

Change the file hosts to correspond to your network setup.

# platform/hosts
...
[nodes]
192.168.0.10 ansible_user=nfd
192.168.0.11 ansible_user=nfd
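For a larger testbed, the `[nodes]` section can be generated instead of typed by hand. The following is a minimal sketch under the assumption that the node IPs are consecutive; the function `nodes_inventory` is hypothetical, and it only produces the `[nodes]` section, not the remainder of the hosts file.

```python
def nodes_inventory(first_octet, count, user="nfd", prefix="192.168.0."):
    """Build the [nodes] section of an Ansible INI inventory.

    Hypothetical helper; assumes consecutive management IPs.
    """
    lines = ["[nodes]"]
    for offset in range(count):
        lines.append(f"{prefix}{first_octet + offset} ansible_user={user}")
    return "\n".join(lines)


# Two nodes starting at 192.168.0.10, as in the listing above.
print(nodes_inventory(10, 2))
```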

As mentioned above, the user on our nodes is called nfd. If this differs in your setup, please change the following files accordingly:

- platform/hosts

- platform/roles/node/tasks/main.yml

- example.config.py (the config file(s) referenced by scenario files)

You may test the connection by entering the platform directory and issuing:

ansible -m ping nodes
ansible -m shell -a 'hostname' nodes

We now use Ansible to install all necessary packages.

# Run the playbook and provide the sudo password for the gateway
ansible-playbook -K playbook_gateway.yml

The emulation library (emulation_lib) on the gateway server needs some Python3 libraries, which can be set up easily using a virtual environment.

python3 -m venv ~/emulation/emu-venv
source ~/emulation/emu-venv/bin/activate
pip install wheel
pip install paramiko
pip install python-igraph

Before starting emulations, this virtual environment must be activated (which sets the required shell environment variables) using:

source ~/emulation/emu-venv/bin/activate

Additionally, the location of the emulation_lib must be added to the $PYTHONPATH environment variable prior to launching emulations. A non-persistent way of doing this is issuing:

export PYTHONPATH=$PYTHONPATH:~/emulation
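To verify that the virtual environment and $PYTHONPATH are set up as intended, a small check along the following lines may help. It is a sketch, not part of the framework: the helper `is_importable` is hypothetical, and `igraph` is the import name of the python-igraph package.

```python
import importlib.util


def is_importable(module_name: str) -> bool:
    """Return True if Python can locate the given top-level module."""
    return importlib.util.find_spec(module_name) is not None


# Run this inside the activated virtual environment with PYTHONPATH set.
for name in ("emulation_lib", "paramiko", "igraph"):
    print(name, "found" if is_importable(name) else "MISSING")
```

If `emulation_lib` is reported as missing, $PYTHONPATH most likely does not point to the directory that contains it.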

Step 4: Set up the nodes

We will be using Ansible for this part as well. Run one of the following playbooks and provide the sudo password for the nodes. Many of the example scenarios depend on the udperf applications (a custom traffic generator akin to Iperf) being installed on the nodes. If you wish to execute these scenarios, run playbook_nodes_plus_udperf.yml.

cd ~/emulation/emulation_lib-example-scenarios/ansible_host_setup/platform
# Basic node setup (allows for executing the hello world example)
ansible-playbook -K playbook_nodes.yml
# Basic node setup + udperf application
ansible-playbook -K playbook_nodes_plus_udperf.yml

Additionally, some changes to the /etc/sudoers file on the nodes are necessary so that the user nfd can call the following commands without providing the sudo password. (Users of Raspbian usually do not have to do this, as Raspbian runs programs requesting elevated privileges without asking for a password.)

# We strongly advise using the application visudo to perform the changes instead of a normal text editor
sudo visudo
# Minimal line allowing necessary operations to be performed without entering the sudo password:
nfd ALL=(ALL) NOPASSWD: /sbin/tc, /usr/bin/chrt, /sbin/iptables, /usr/bin/killall

The application cmdscheduler on the nodes is a vital part of the emulation framework.

You may test the basic operational capability of the cmdscheduler by logging in on any node and performing:

cd ~/emulation/emulation-cmdscheduler
cmdScheduler examples/example.cmd

You may perform a preliminary test of the installation by executing test.sh on one of the nodes.

# Copy file to a node:
cd ~/emulation/emulation_lib-example-scenarios/ansible_host_setup
scp test.sh nfd@192.168.0.10:~/emulation/
# on the node:
cd ~/emulation
sh test.sh
# Example output (positive):
# RTNETLINK answers: No such file or directory
# SETUP COMPLETE
# cleaning up ...
# pid 3856's current scheduling policy: SCHED_OTHER
# pid 3856's current scheduling priority: 0
# CLEANUP COMPLETE

If you intend to use link command backends which utilize IFBs (Intermediate Functional Blocks, an advanced feature), you have to ensure that at least one IFB-device (ifb0) is loaded / usable before starting the emulations. To check if the IFB module can be loaded, try to run test_ifb.sh with sudo.

# Copy file to a node:
cd ~/emulation/emulation_lib-example-scenarios/ansible_host_setup
scp test_ifb.sh nfd@192.168.0.10:~/emulation/
# on the node:
cd ~/emulation
sudo sh test_ifb.sh
# Acceptable outputs are:
# 1: (ifb module is available and could be loaded)
# # ifb ...
# # ...
# 2: (in case ifb is built/loaded as part of the kernel)
# modprobe: FATAL: Module ifb is builtin.
# At least ifb0 should show up in
#
#1: lo: mtu 65536 qdisc noqueue state UNKNOWN mode DEFAULT group default qlen 1000
# link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
#2: eth0: mtu 1500 qdisc pfifo_fast state UP mode DEFAULT group default qlen 1000
# link/ether b8:27:eb:db:8a:52 brd ff:ff:ff:ff:ff:ff
#3: ifb0: mtu 1500 qdisc pfifo_fast state UNKNOWN mode DEFAULT group default qlen 32
# link/ether 5e:0d:23:1a:f2:c0 brd ff:ff:ff:ff:ff:ff
#4: ifb1: mtu 1500 qdisc pfifo_fast state UNKNOWN mode DEFAULT group default qlen 32
# link/ether 76:ba:3d:e4:e5:ed brd ff:ff:ff:ff:ff:ff
#

Step 5: Set up time synchronization between the gateway and nodes

In order for the emulation to work properly, the system clocks of the nodes have to be synchronized with that of the gateway server. The folder emulation_lib-example-scenarios/timesync_setup contains the instructions and config files of our setup.

This time synchronization is intended to be used in a Local Area Network and with the gateway server acting as a local time server. We use the modern NTP implementation chrony.

Both the gateway (192.168.0.1), acting as the local time server, and the nodes (192.168.0.x), acting as clients of the local time server, need to have the NTP daemon chrony installed and running.

sudo apt install chrony

Make sure to install chrony in version 3.1-5 or later (the provided config files are based on this version).

You may want to remove the possibly preinstalled NTP daemon from the nodes:

sudo apt-get remove ntp

Back up the default chrony configuration file on the gateway server and the nodes (the modified configuration replaces it in the next step):

sudo mv /etc/chrony/chrony.conf /etc/chrony/chrony.conf.bak

Modify the config files to suit your network (see examples further below) and update / replace the default configuration with the modified configurations:

# on the gateway
sudo cp gateway/chrony.conf /etc/chrony/chrony.conf
# on the nodes (if nodes/chrony.conf has been copied to the nodes first)
sudo cp nodes/chrony.conf /etc/chrony/chrony.conf

Logged in on a node, you may check the status of the timesync using:

chronyc tracking
# example output:
# Reference ID : C0A80002 (192.168.0.1)
# Stratum : 11
# Ref time (UTC) : Tue Aug 14 12:56:24 2018
# System time : 0.000000522 seconds fast of NTP time
# Last offset : +0.000001665 seconds
# RMS offset : 0.000001106 seconds
# Frequency : 65.088 ppm fast
# Residual freq : +0.000 ppm
# Skew : 0.015 ppm
# Root delay : 0.000104 seconds
# Root dispersion : 0.000041 seconds
# Update interval : 12.2 seconds
# Leap status : Normal
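When checking many nodes, it can be handy to extract the current clock offset from this output programmatically. The following sketch parses the "System time" line of text in the format shown above; the function name `system_time_offset` is hypothetical, and the sample is an excerpt of the example output.

```python
import re


def system_time_offset(tracking_output: str) -> float:
    """Return the system clock offset in seconds (positive = clock is fast)."""
    match = re.search(
        r"^System time\s*:\s*([0-9.]+) seconds (fast|slow) of NTP time",
        tracking_output,
        re.MULTILINE,
    )
    if match is None:
        raise ValueError("no 'System time' line found")
    offset = float(match.group(1))
    return offset if match.group(2) == "fast" else -offset


SAMPLE = """\
Reference ID    : C0A80002 (192.168.0.1)
Stratum         : 11
System time     : 0.000000522 seconds fast of NTP time
Last offset     : +0.000001665 seconds
"""

offset = system_time_offset(SAMPLE)
print(f"{offset * 1e6:.3f} microseconds")
```

On a node, the input text could be obtained with `subprocess.run(["chronyc", "tracking"], capture_output=True, text=True).stdout`.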

Examples of chrony configuration files for the gateway server and the nodes:

#
# gateway/chrony.conf
#
# It is notable here, that we have not defined an external time server and
# fully rely on the local clock (avoid jumps due to adjustments to external
# time server: "local stratum 10").
# You may want to change "allow 192.168.0/24" to be the subnet interconnecting
# the gateway and the nodes.
# Welcome to the chrony configuration file. See chrony.conf(5) for more
# information about usable directives.
#pool 2.debian.pool.ntp.org iburst
local stratum 10
# This directive specifies the location of the file containing ID/key pairs for
# NTP authentication.
keyfile /etc/chrony/chrony.keys
# This directive specifies the file into which chronyd will store the rate
# information.
driftfile /var/lib/chrony/chrony.drift
# Uncomment the following line to turn logging on.
#log tracking measurements statistics
# Log files location.
logdir /var/log/chrony
# Stop bad estimates upsetting machine clock.
maxupdateskew 100.0
# This directive enables kernel synchronisation (every 11 minutes) of the
# real-time clock. Note that it can’t be used along with the 'rtcfile' directive.
rtcsync
# Step the system clock instead of slewing it if the adjustment is larger than
# one second, but only in the first three clock updates.
makestep 1 3
allow 192.168.0/24

Note that we configured no external time server for the gateway server, in order to avoid occasional (micro) jumps in the gateway server’s time and the resulting need for the nodes to adapt. You may of course choose to handle this differently.

#
# nodes/chrony.conf
#
# You may want to change "server 192.168.0.1" to fit the IP of your gateway.
# The polling interval is low, allowing for a more accurate time sync.
# The higher polling frequency is no problem due to the LAN and own timeserver.
# Welcome to the chrony configuration file. See chrony.conf(5) for more
# information about usable directives.
#pool 2.debian.pool.ntp.org iburst
server 192.168.0.1 minpoll 0 maxpoll 2 polltarget 60 maxdelaydevratio 2 xleave
# This directive specifies the location of the file containing ID/key pairs for
# NTP authentication.
keyfile /etc/chrony/chrony.keys
# This directive specifies the file into which chronyd will store the rate
# information.
driftfile /var/lib/chrony/chrony.drift
# Uncomment the following line to turn logging on.
# log tracking measurements statistics
# Log files location.
logdir /var/log/chrony
# Stop bad estimates upsetting machine clock.
maxupdateskew 100.0
# This directive tells 'chronyd' to parse the 'adjtime' file to find out if the
# real-time clock keeps local time or UTC. It overrides the 'rtconutc' directive.
hwclockfile /etc/adjtime
# This directive enables kernel synchronisation (every 11 minutes) of the
# real-time clock. Note that it can’t be used along with the 'rtcfile' directive.
rtcsync
# Step the system clock instead of slewing it if the adjustment is larger than
# one second, but only in the first three clock updates.
makestep 1 3

Step 6: Read up on the technical background

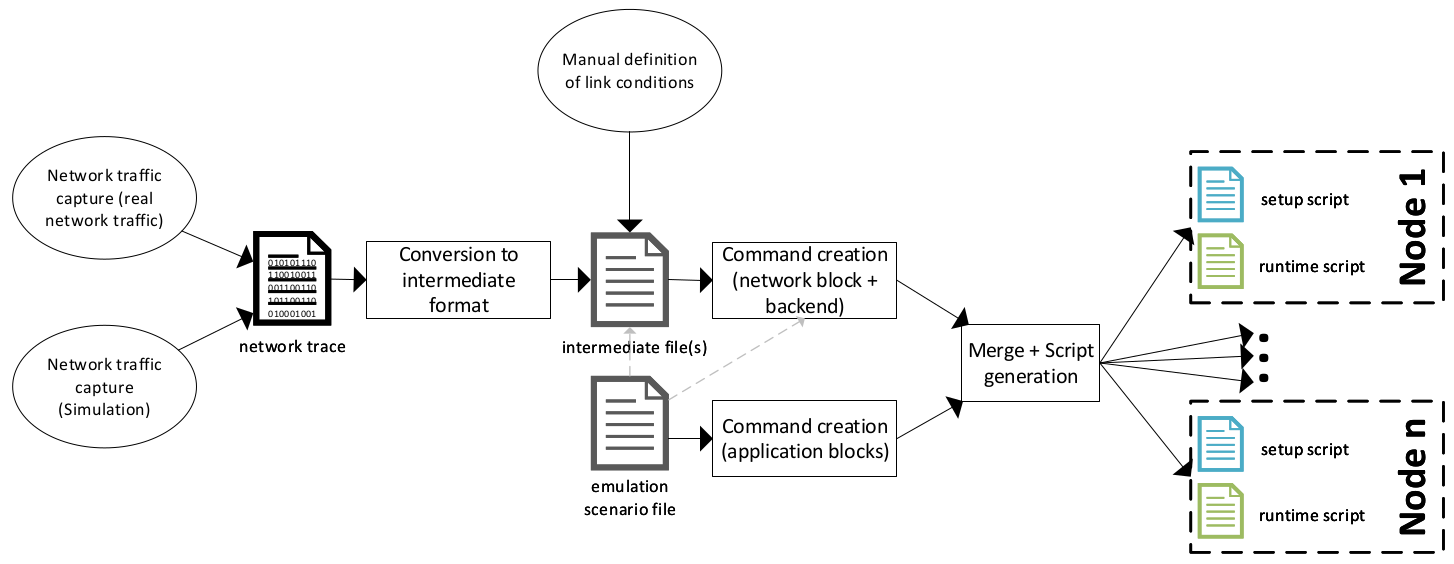

A scenario file (as showcased in the next step) describes a whole emulation. It is a normal Python3 source file and uses the emulation_lib to perform individual emulation runs. All instructions ultimately boil down to commands of two types:

- Commands to change transmission properties of links (reachability, bandwidth, delay, loss) in periodic intervals

- “other events”. Examples for “other events” may be the start / stop of applications on nodes (basically arbitrary commands).

To improve reusability and abstract away complexity, these commands are ultimately created by so-called function blocks (also called blocks). Function blocks are simply Python classes that give users a friendlier interface for command creation, with the added benefit that the blocks carry built-in knowledge (much like classes in object-oriented programming). Blocks (can) know which configuration files are needed where, which commands achieve a given goal and which results should be gathered from where. Function blocks are always assigned to the nodes for which they create commands.

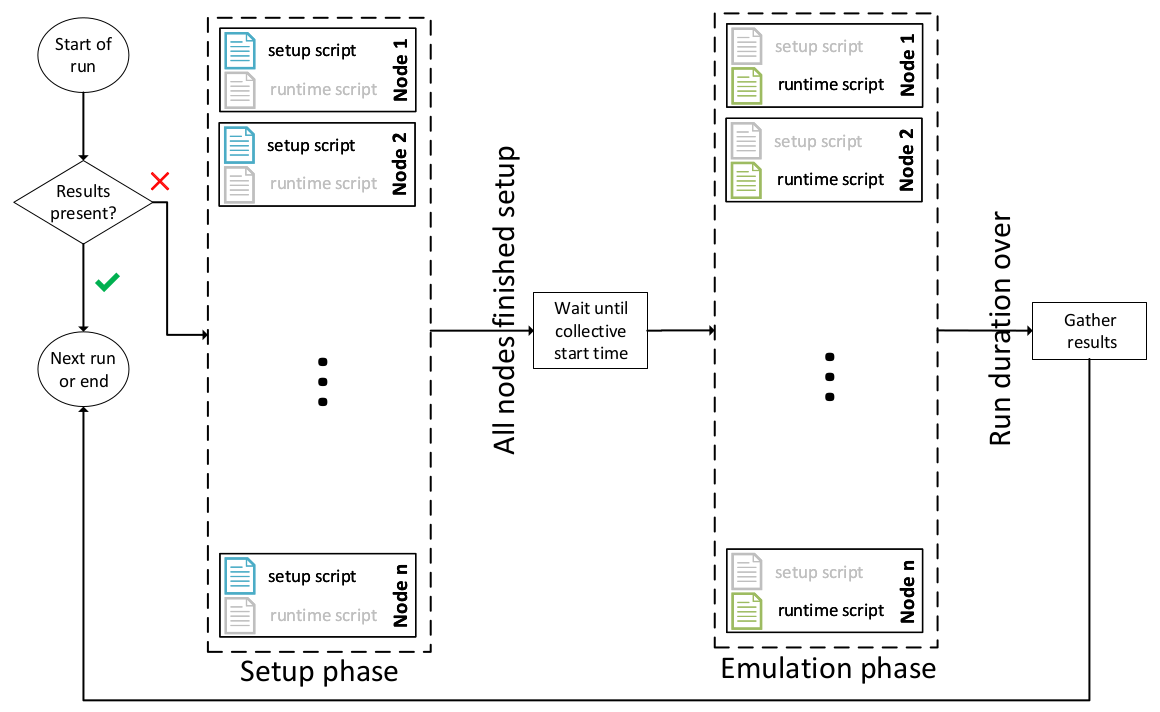

When starting an emulation run, all applications/blocks that are part of the emulation are asked to (re-)generate their commands and add them to the nodes they are assigned to (user commands/results are never reset automatically). The intermediate files of the network blocks are copied to the output directory, renamed to match the actual nodes and possibly modified by a preprocessing step (a means of, e.g., mirroring intermediate files). The “link commands” and “other event commands” (e.g. created by application blocks) are merged to form two command files per node: a setup script and a runtime script. Both scripts are deployed to the nodes at the beginning of the setup phase. The setup script (initialization commands) is executed prior to the emulation, and the emulation only starts once all nodes have finished their individual setup phase. The runtime script is given to a node-local daemon which executes the respective commands at the given time offsets. The common emulation start time is determined by the emulation_lib on the gateway server after all nodes have finished their setup phases. The local daemon applications (cmdscheduler) on the nodes are then given this start time, at which they begin issuing the commands of the runtime script.
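The idea of executing commands at time offsets relative to a common start time can be illustrated as follows. This is a simplified sketch and not the actual cmdscheduler, which is a separate application on the nodes; the function names are hypothetical, and commands are merely printed instead of executed.

```python
import time


def absolute_schedule(start_time, commands):
    """Turn (offset, command) pairs into (absolute time, command) pairs, ordered by time."""
    timed = [(start_time + offset, cmd) for offset, cmd in commands]
    return sorted(timed, key=lambda item: item[0])


def run_schedule(start_time, commands, execute=print, clock=time.time, sleep=time.sleep):
    """Wait for each command's due time, then hand the command to `execute`."""
    for due, cmd in absolute_schedule(start_time, commands):
        remaining = due - clock()
        if remaining > 0:
            sleep(remaining)
        execute(cmd)


# All nodes would receive the same start time; offsets are in seconds.
common_start = time.time() + 0.1
run_schedule(common_start, [(0.05, "change link properties"), (0.0, "start application")])
```

Because every node waits for the same absolute start time, the quality of the time synchronization set up in Step 5 directly limits how well the nodes act in unison.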

After an emulation run has ended (its duration is defined explicitly), the emulation framework gathers all expected result files from the nodes and places them in a predefined directory on the gateway server. Already completed runs are not performed a second time, which eases the process of performing many runs (possibly additional runs or re-runs). A run is considered completed if all of its expected results (from applications as well as user-defined ones) are actually present as files. As a consequence, a run is repeated if a user adds or changes an expected result (by hand or by adding / changing an application). Note that although already (successfully) performed runs are not repeated, the command creation process is still performed for them in order to generate the list of expected results.
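The completion criterion can be sketched as a simple file presence check. This is an illustration only, not the emulation_lib's implementation; the function names are hypothetical, while the `%RUN%` marker is the one used in scenario files for per-run result names.

```python
import os
import tempfile


def expected_names(templates, run):
    """Replace the %RUN% marker in each result name with the run number."""
    return [name.replace("%RUN%", str(run)) for name in templates]


def run_is_complete(result_dir, templates, run):
    """A run counts as complete only if every expected result file exists."""
    return all(
        os.path.isfile(os.path.join(result_dir, name))
        for name in expected_names(templates, run)
    )


templates = ["cmdExample0.log_%RUN%", "cmdSched0.log_%RUN%"]
with tempfile.TemporaryDirectory() as result_dir:
    # Simulate a finished run 0: both expected files are present.
    for name in expected_names(templates, 0):
        open(os.path.join(result_dir, name), "w").close()
    print(run_is_complete(result_dir, templates, 0))  # True
    print(run_is_complete(result_dir, templates, 1))  # False
```

Adding a further entry to `templates` would make run 0 count as incomplete again, which mirrors the re-run behaviour described above.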

As there are two basic types of commands (“link commands” and “other commands”), there are two basic types of functional blocks.

Functional blocks that create commands to periodically change link properties take so-called intermediate files and create the commands using one of multiple link command backends. An intermediate file is an intermediate representation of the link parameters at certain points in time (offsets from the emulation start).

# intermediate file example: start, delay, loss random, rate
0.000000 0.000000 0.00 0.000
1.000000 0.000431 0.00 10738.000
2.000000 0.000486 0.00 12235.184
3.000000 0.000477 0.00 12149.280
...

Intermediate files can be created by hand, or can be generated from traffic logs/dumps (e.g. gathered by performing a simulation or real world traffic dumps/logs). The intermediate files used in this example were generated based on traffic dumps generated from simulated wireless links (ndnsim/ns3).
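Reading such a file is straightforward. The sketch below assumes whitespace-separated numeric columns and skips empty lines and lines starting with `#`; the function `parse_intermediate` is hypothetical and not part of the emulation_lib, and the column names used in the loop follow the example above.

```python
from typing import List, Tuple


def parse_intermediate(text: str) -> List[Tuple[float, ...]]:
    """Parse intermediate file content into one tuple of floats per row."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        rows.append(tuple(float(field) for field in line.split()))
    return rows


SAMPLE = """\
0.000000 0.000000 0.00 0.000
1.000000 0.000431 0.00 10738.000
2.000000 0.000486 0.00 12235.184
"""

for start, delay, loss, rate in parse_intermediate(SAMPLE):
    print(f"offset={start} delay={delay} loss={loss} rate={rate}")
```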

When commands are to be created, the intermediate representation is given to one of many backends, which creates the actual commands for changing the link properties. Only the first column of an intermediate file is fixed: it must always be the time offset relative to the start of the emulation. All other columns can differ (be custom in count, order and meaning), but must be supported by the chosen backend. We chose this approach to keep this aspect flexible because:

- We cannot foresee all the needs of later users.

- Some methods of link emulation work better/worse in some scenarios.

- We do not claim to have found “the best way” of doing this.

- This flexibility can come in handy when comparing ways to emulate link properties.

- It simplifies the integration of new mechanisms/technologies.

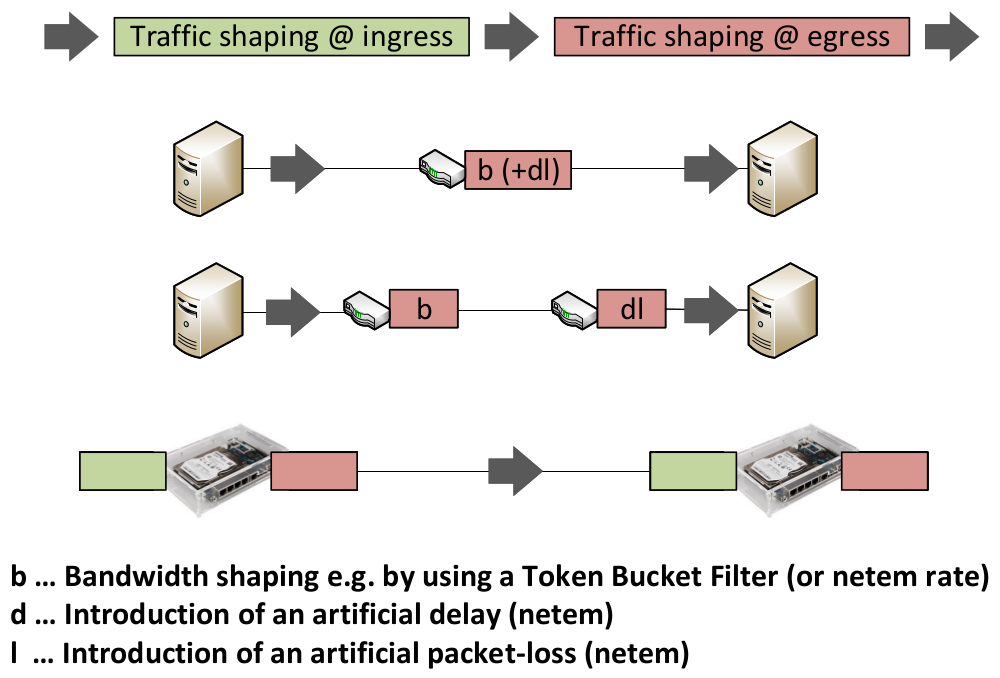

The following illustration shows a selection of ways to perform traffic shaping on wired links. Separating the bandwidth shaping from the emulation of delay/loss is the easiest way to achieve the wanted link characteristics, but requires more (intermediary) nodes. Omitting intermediate nodes and shaping the bandwidth and delay/loss on the same node / at the same time requires more care regarding the configuration in order to avoid adverse interactions in the resulting “queuing hierarchy”. Using Intermediate Functional Blocks (IFBs), incoming traffic can be shaped as well, which opens up more possibilities to shape the traffic (to separate responsibilities).

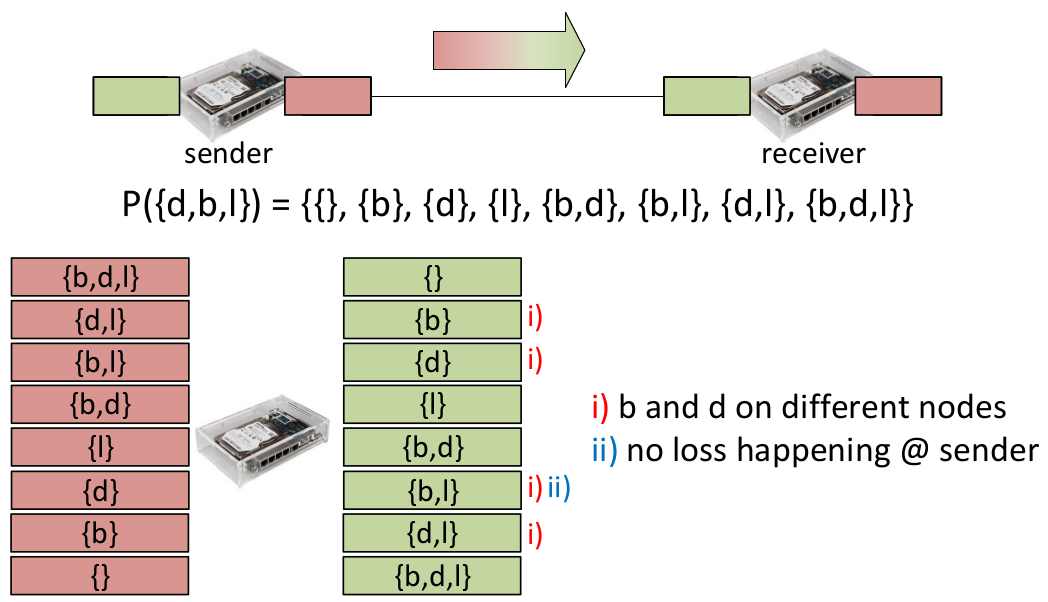

Some possible distributions of the traffic shaping responsibilities for a link are shown in the next image. A (link command) backend takes an intermediate file and creates commands to shape the traffic on the link according to a variation (there are, for example, backends for “b_”, “bdl_” and “b_dl”). Not all shown variations make sense / are practical.

Functional blocks concerning the creation of “other events” are usually written by users and cover the life cycle of one or multiple applications on one or multiple nodes (e.g. start, stop, config files, result files). Like the blocks for link property emulation, these blocks usually need to be parameterized by the user. Additionally, the user can schedule the execution of custom commands and the gathering of results without the need to write an application block.

Step 7: Have a look at a simple scenario file

The following is a commented example of a scenario file which covers a broad range of emulation_lib functionalities. It also utilizes a custom application as an example of how to create your own application blocks.

from emulation_lib.emulation import Emulation

from emulation_lib.network_blocks.network_block import NetworkBlock

from emulation_lib.preprocessing.set_constant_value_for_column import SetConstantValueForColumn

from emulation_lib.preprocessing.repeat_file_until import RepeatIntermedFileUntil

from emulation_lib.linkcmd_backends.bdl_ import BDL_

import iperf_logapp

NUM_RUNS = 2

EMULATION_DURATION = 100 # seconds

NUMBER_OF_NODES = 2

#

# general emulation settings

#

emu = Emulation("./example.config.py", list(range(0,NUMBER_OF_NODES)))

emu.setName("HelloWorld")

emu.setLinkCmdBackend(BDL_())

emu.setSecondsBetweenRuns(5)

emu.setOutputDirectory('./results/HelloWorld')

# time in seconds, after which a run is to be considered finished, which triggers the fetching

# of the results and the initiation of the next run

emu.setDuration(EMULATION_DURATION) # seconds

#

# configure network block

#

# the folder containing the pre-calculated intermediate files to be used

WIFI_BLOCK = "./blocks/simple_mobility_802.11G_AdHoc_constant"

# create a new network block object based on the given intermediate file folder

wlan = NetworkBlock(WIFI_BLOCK)

wlan.setNodes(emu.getNodes()[0:NUMBER_OF_NODES]) # assign all two nodes to be part of the network

# select interval of network condition changes

# ./blocks/simple_mobility_802.11G_AdHoc_constant/0_1_1000.txt

# ./blocks/simple_mobility_802.11G_AdHoc_constant/1_0_1000.txt

wlan.selectInterval(1000)

# preprocessing steps are ways to modify intermediate files without changing the originals (experimentation)

# if the given intermediate files were too long, here we could use a preprocessing step to only take the first n seconds

wlan.addPreprocessingStep(RepeatIntermedFileUntil(EMULATION_DURATION, False))

# for this example, we only want to restrict the bandwidth (column #3 in the intermediate file)

# and therefore overwrite the columns for delay (#1) and loss (#2) with zeros using the following preprocessing steps

wlan.addPreprocessingStep(SetConstantValueForColumn("", 1, 0))

wlan.addPreprocessingStep(SetConstantValueForColumn("", 2, 0))

# before (part of 1_0_1000.txt)

# 10.000000 0.001542 0.44 5522.400

# 11.000000 0.001679 0.22 5632.848

# 12.000000 0.002284 1.71 3522.064

# after (part of 1_0_1000.txt)

# 10.000000 0 0 5522.400

# 11.000000 0 0 5632.848

# 12.000000 0 0 3522.064

# add the network block to the emulation

emu.addNetworkBlocks([wlan])

#

# configure the applications to be run

#

# Perform iperf bandwidth measurement (UDP) from client_node to server_node.

# Create a new application functional block, passing node references.

# This application block takes care of the application lifecycle (here starting/stopping an iperf UDP bandwidth test)

# as well as the collection of results.

# server, client, client_runtime[s], client_bandwidth[Mbps]

iperfApp = iperf_logapp.IperfLogApp(server=emu.getNode(0), client=emu.getNode(1),
                                    client_runtime=EMULATION_DURATION-1, client_bandwidth=100)

# add the application to the emulation object

emu.addApplications([iperfApp])

# schedule some arbitrary commands at three time points (second 0, 30 and 90 of the emulation)

for node in emu.getNodes():
    node.scheduleUserCmd(0, "date > /home/nfd/cmdExample.txt")
    node.scheduleUserCmd(30, "date >> /home/nfd/cmdExample.txt")
    node.scheduleUserCmd(90, "date >> /home/nfd/cmdExample.txt")

# Fetch the files created by the previous commands, additionally fetch the logfiles of the command scheduler.

# addUserResult() adds a file to the emulation results that are to be fetched from the nodes.

# The marker "%RUN%" is replaced with the actual run-number before the start of the run.

# arguments of addUserResult(): remote path, local path/name inside "results/"

for node in emu.getNodes():
    node.addUserResult("/home/nfd/cmdExample.txt", "cmdExample" + str(node.getId()) + ".log_%RUN%")
    node.addUserResult("/home/nfd/cmdScheduler.log", "cmdSched" + str(node.getId()) + ".log_%RUN%")

#

# Execute the runs / start the emulation process

#

for run in range(0,NUM_RUNS):
    emu.start(run)

# The call of start() triggers some sanity checks, after passing those, all blocks (wlan and iperfApp) create

# their part of the setup-time and run-time commands needed to perform the emulation.

# The setup commands and runtime commands are wrapped in scripts and deployed to the nodes.

# The nodes execute the setup commands, the emulation waits for ALL nodes to finish their setup phase.

# After this, a common emulation start time is calculated and cmdscheduler instances are started on the nodes (waiting

# for the emulation to start)

# Runs which have been already performed as in "all expected results are present" are skipped.
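The effect of the two column-overwriting preprocessing steps in the scenario file can be reproduced with a few lines of plain Python. This sketch only mirrors the before/after excerpt shown in the comments of the scenario; it is not the implementation of SetConstantValueForColumn, and the function `set_constant_column` is hypothetical.

```python
def set_constant_column(line, column, value):
    """Overwrite one whitespace-separated column of a line with a constant."""
    fields = line.split()
    fields[column] = str(value)
    return " ".join(fields)


before = "10.000000 0.001542 0.44 5522.400"
# Column 0 is the time offset; overwrite delay (#1) and loss (#2) with zeros.
after = set_constant_column(set_constant_column(before, 1, 0), 2, 0)
print(after)  # 10.000000 0 0 5522.400
```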

Step 8: Perform a simple emulation

cd ~/emulation/emulation_lib-example-scenarios/hello-world

Adapt the settings file example.config.py to fit your setup. This is important for the emulation to work and to avoid getting “locked out” of the nodes, as no management network connections are allowed except between the gateway and the nodes (nodes only “communicate” over the emulation network).

vim example.config.py
# commented example of a config file
#import os
#SSH_USER = "nfd" ... user of the testbed nodes
#SSH_PASSWORD = "nfd" ... password of above user
#REMOTE_EMULATION_DIR = "/home/nfd/emulation" ... directory on testbed nodes where emulation files are temporarily stored
#REMOTE_CONFIG_DIR = os.path.join(REMOTE_EMULATION_DIR,"config")
#REMOTE_RESULT_DIR = os.path.join(REMOTE_EMULATION_DIR,"results")
#REMOTE_DATA_DIR = os.path.join(REMOTE_EMULATION_DIR,"data")
#MIN_START_TIME_OFFSET = 20 ... time between setup phase completion and begin of emulation run
#
#GATEWAY_SERVER = "192.168.0.2" ... the IP address of the gateway/management server/machine
#MNG_PREFIX = "192.168.0." ... the subnet address of the nodes used for management
#EMU_PREFIX = "192.168.1." ... the subnet address of the nodes used for the emulation
#MULTICAST_NETWORK = "224.0.0.1/16" ... (unused, scheduled for removal)
#HOST_IP_START = 10 ... begin of IPs in the subnets (last octet of IP)
#EMU_INTERFACE = "eth0.102" ... interface name on the nodes (connecting to emulation subnet)
#
#PI_CONFIG_HZ = 100 ... this and the next ones are parameters for Token Bucket Filter (tc-TBF)
#LATENCY = 100 #queue length of tbf in ms and netem (used for link command creation in link cmd backends)
#HANDLE_OFFSET=11
#LINK_MTU = 1520 # 1500 according to "ip ad | grep mtu", but 1520 actually works
#
# subtracted from artificially introduced (target-) delay to (try to) mask the one-way-delay of the physical connection
#PHYS_LINK_DELAY_COMPENSATION = 0.000200 # seconds with microsecond precision (e.g. 0.000100 for 100 microseconds)
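As a worked example of the last setting, the following sketch shows how a target delay could be reduced by the physical link delay compensation. The function `compensated_delay` is hypothetical, and clamping the result at zero is our assumption here; the link command backends may handle this differently.

```python
PHYS_LINK_DELAY_COMPENSATION = 0.000200  # seconds, value from the config above


def compensated_delay(target_delay, compensation=PHYS_LINK_DELAY_COMPENSATION):
    """Subtract the physical one-way delay from the target delay.

    Rounded to microsecond precision; never negative (assumption).
    """
    return max(0.0, round(target_delay - compensation, 6))


# A target delay of 431 microseconds leaves 231 microseconds to be emulated.
print(compensated_delay(0.000431))
# Targets below the compensation value cannot be reached and yield zero.
print(compensated_delay(0.000100))
```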

Now everything should be in place, so let's try starting an emulation.

source ~/emulation/emu-venv/bin/activate
export PYTHONPATH=$PYTHONPATH:~/emulation
python3 hello_world.py

Alternatively, you can utilize the bash script start.sh to start the emulation.

bash start.sh

During the course of the emulation, the following directories and artifacts are created:

# |- results/HelloWorld/
# | |- commands/ ... contains the setup- and runtime command file used in the most recent run
# | |- intermediates/ ... contains the intermediate files used to emulate the link conditions in the most recent run
# | |- results/ ... contains the actual results of the emulation
# | |- 192.168.1.10.server.log_0 ... Iperf server log (run #0)
# | |- 192.168.1.10.server.log_1 ... Iperf server log (run #1)
# | |- 192.168.1.11.client.log_0 ... Iperf client log (run #0)
# | |- 192.168.1.11.client.log_1 ... Iperf client log (run #1)
# | |- start_times.txt ... Informational overview of the time points marking the begin of the runs
# |
# |- log.txt ... holds log messages of the most recently started emulation

The Iperf server logs depict the traffic amount per second (bandwidth) measured arriving from the client (sender).